С версии 2.9.x

На этой странице

Обновление клиентского кластера

Если в клиентском кластере был включен “Модуль локального сбора метрик”, а в кластере управления “Модуль мониторинга. Компонент централизованного сбора метрик”, то после завершения обновления клиентского кластера с версии 2.9.Х на 2.10.0 и выше необходимо выполнить действия:

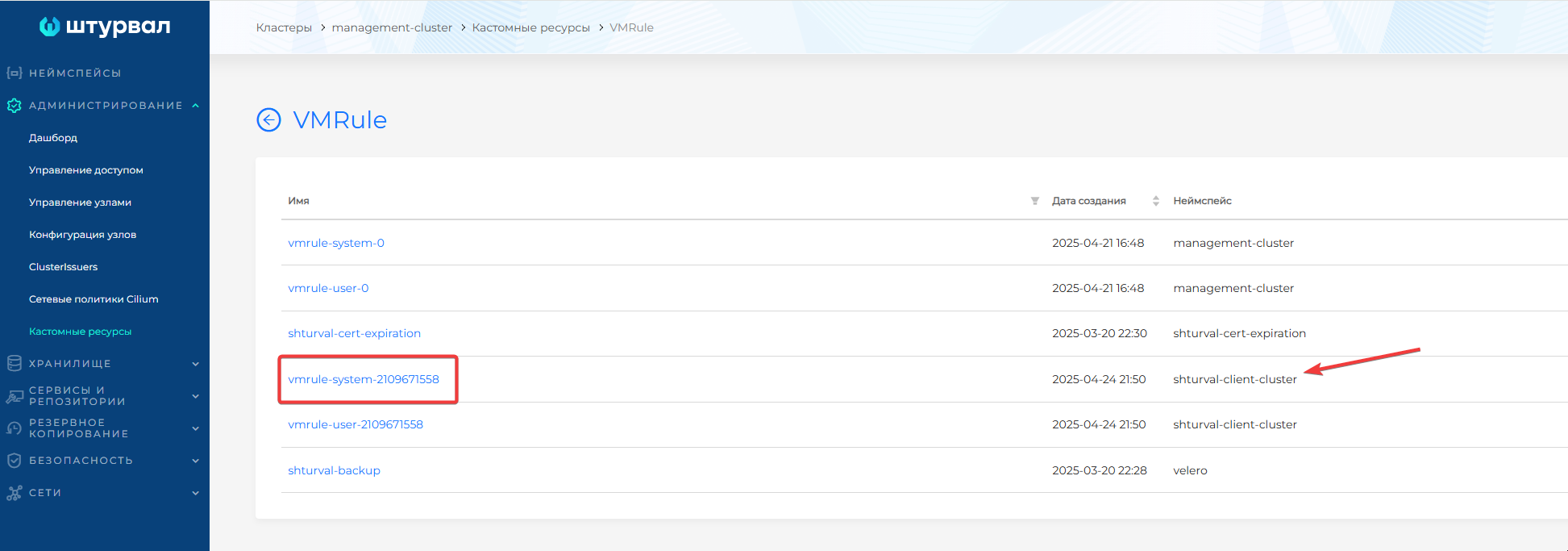

- В графическом интерфейсе кластера управления перейдите на страницу Кастомные ресурсы раздела Администрирование. Найдите и откройте API-группу

operator.victoriametrics.com; - Перейдите в список объектов типа

VMRule; - В таблице найдите VMRule с префиксом

systemв неймспейсе с именем клиентского кластера;

Скриншот

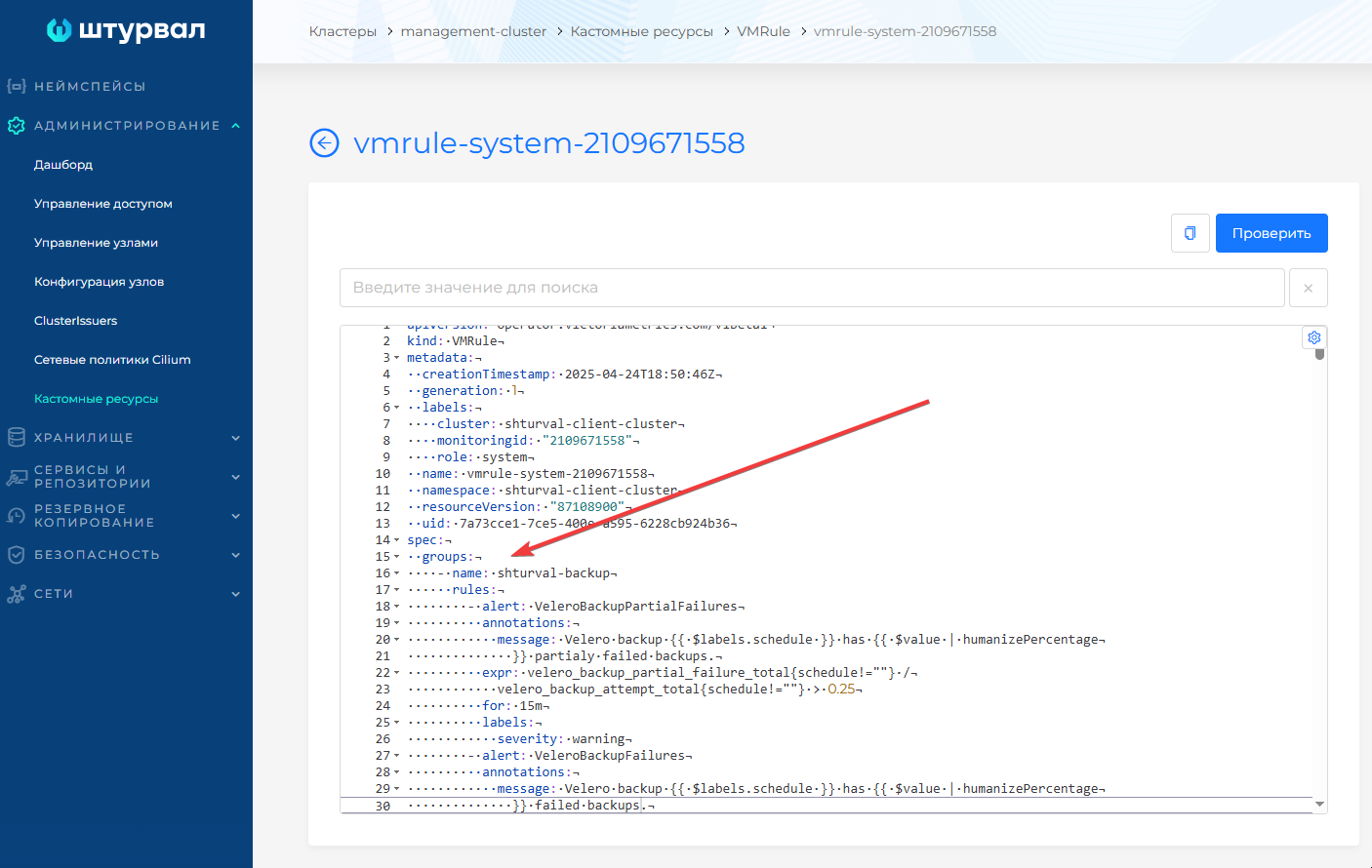

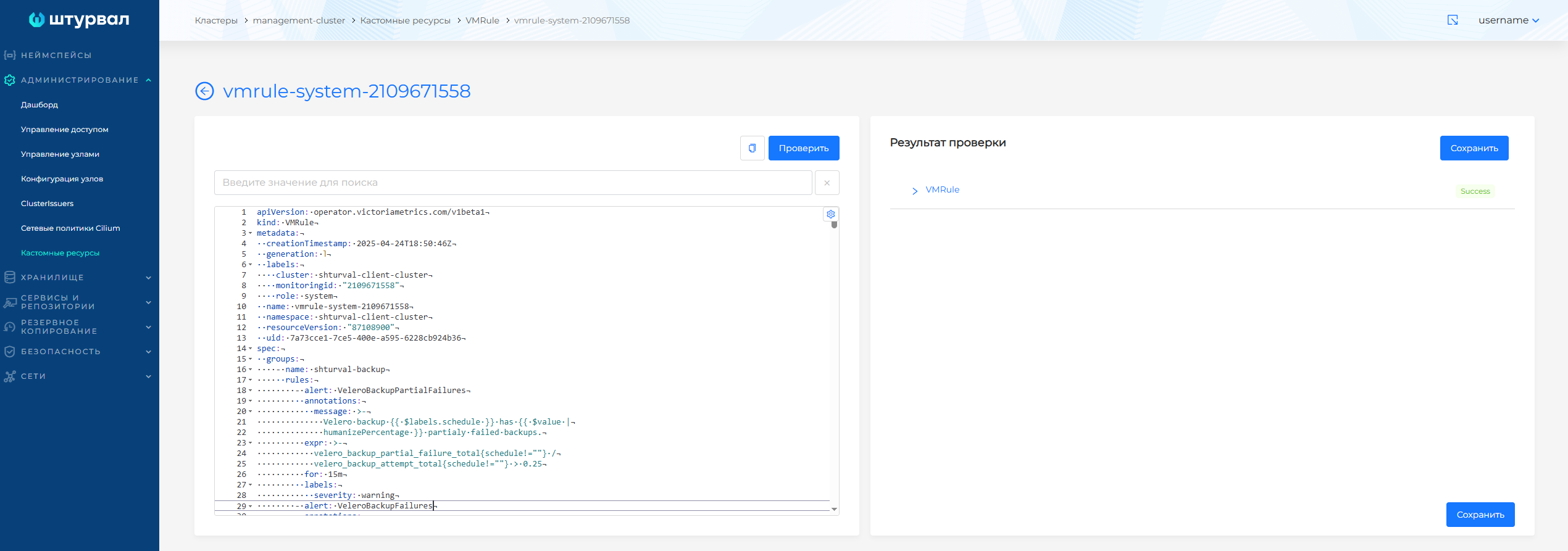

- Перейдите в манифест VMRule с префиксом

systemв неймспейсе с именем клиентского кластера; - Замените блок

specна содержимое, указанное в блоке “Обновленная часть spec”. Выполните проверку, нажав кнопку Проверить, затем сохраните изменения:

Скриншот

Обновленная часть spec

spec:

groups:

- name: shturval-backup

rules:

- alert: VeleroBackupPartialFailures

annotations:

message: >-

Velero backup {{ $labels.schedule }} has {{ $value |

humanizePercentage }} partialy failed backups.

expr: >-

velero_backup_partial_failure_total{schedule!=""} /

velero_backup_attempt_total{schedule!=""} > 0.25

for: 15m

labels:

severity: warning

- alert: VeleroBackupFailures

annotations:

message: >-

Velero backup {{ $labels.schedule }} has {{ $value |

humanizePercentage }} failed backups.

expr: >-

velero_backup_failure_total{schedule!=""} /

velero_backup_attempt_total{schedule!=""} > 0.25

for: 15m

labels:

severity: warning

- name: x509-certificate-exporter.rules

rules:

- alert: X509ExporterReadErrors

annotations:

description: >-

Over the last 15 minutes, this x509-certificate-exporter instance

has experienced errors reading certificate files or querying the

Kubernetes API. This could be caused by a misconfiguration if

triggered when the exporter starts.

summary: Increasing read errors for x509-certificate-exporter

expr: delta(x509_read_errors[15m]) > 0

for: 5m

labels:

severity: warning

- alert: CertificateError

annotations:

description: >-

Certificate could not be decoded {{if $labels.secret_name }}in

Kubernetes secret "{{ $labels.secret_namespace }}/{{

$labels.secret_name }}"{{else}}at location "{{ $labels.filepath

}}"{{end}}

summary: Certificate cannot be decoded

expr: x509_cert_error > 0

for: 15m

labels:

severity: warning

- alert: CertificateRenewal

annotations:

description: >-

Certificate for "{{ $labels.subject_CN }}" should be renewed {{if

$labels.secret_name }}in Kubernetes secret "{{

$labels.secret_namespace }}/{{ $labels.secret_name }}"{{else}}at

location "{{ $labels.filepath }}"{{end}}

summary: Certificate should be renewed

expr: (x509_cert_not_after - time()) < (28 * 86400)

for: 15m

labels:

severity: warning

- alert: CertificateExpiration

annotations:

description: >-

Certificate for "{{ $labels.subject_CN }}" is about to expire

after {{ humanizeDuration $value }} {{if $labels.secret_name }}in

Kubernetes secret "{{ $labels.secret_namespace }}/{{

$labels.secret_name }}"{{else}}at location "{{ $labels.filepath

}}"{{end}}

summary: Certificate is about to expire

expr: (x509_cert_not_after - time()) < (14 * 86400)

for: 15m

labels:

severity: critical

- name: alertmanager.rules

rules:

- alert: AlertmanagerFailedReload

annotations:

description: >-

Configuration has failed to load for {{ $labels.namespace }}/{{

$labels.pod}}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/alertmanager/alertmanagerfailedreload

summary: Reloading an Alertmanager configuration has failed.

expr: >-

# Without max_over_time, failed scrapes could create false

negatives, see

#

https://www.robustperception.io/alerting-on-gauges-in-prometheus-2-0

for details.

max_over_time(alertmanager_config_last_reload_successful{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics"}[5m])

== 0

for: 10m

labels:

severity: critical

- alert: AlertmanagerMembersInconsistent

annotations:

description: >-

Alertmanager {{ $labels.namespace }}/{{ $labels.pod}} has only

found {{ $value }} members of the {{$labels.job}} cluster.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/alertmanager/alertmanagermembersinconsistent

summary: >-

A member of an Alertmanager cluster has not found all other

cluster members.

expr: >-

# Without max_over_time, failed scrapes could create false

negatives, see

#

https://www.robustperception.io/alerting-on-gauges-in-prometheus-2-0

for details.

max_over_time(alertmanager_cluster_members{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics"}[5m])

< on (namespace,service,cluster) group_left

count by (namespace,service,cluster) (max_over_time(alertmanager_cluster_members{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics"}[5m]))

for: 15m

labels:

severity: critical

- alert: AlertmanagerFailedToSendAlerts

annotations:

description: >-

Alertmanager {{ $labels.namespace }}/{{ $labels.pod}} failed to

send {{ $value | humanizePercentage }} of notifications to {{

$labels.integration }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/alertmanager/alertmanagerfailedtosendalerts

summary: An Alertmanager instance failed to send notifications.

expr: |-

(

rate(alertmanager_notifications_failed_total{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics"}[5m])

/

ignoring (reason) group_left rate(alertmanager_notifications_total{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics"}[5m])

)

> 0.01

for: 5m

labels:

severity: warning

- alert: AlertmanagerClusterFailedToSendAlerts

annotations:

description: >-

The minimum notification failure rate to {{ $labels.integration }}

sent from any instance in the {{$labels.job}} cluster is {{ $value

| humanizePercentage }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/alertmanager/alertmanagerclusterfailedtosendalerts

summary: >-

All Alertmanager instances in a cluster failed to send

notifications to a critical integration.

expr: |-

min by (namespace,service,integration,cluster) (

rate(alertmanager_notifications_failed_total{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics", integration=~`.*`}[5m])

/

ignoring (reason) group_left rate(alertmanager_notifications_total{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics", integration=~`.*`}[5m])

)

> 0.01

for: 5m

labels:

severity: critical

- alert: AlertmanagerClusterFailedToSendAlerts

annotations:

description: >-

The minimum notification failure rate to {{ $labels.integration }}

sent from any instance in the {{$labels.job}} cluster is {{ $value

| humanizePercentage }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/alertmanager/alertmanagerclusterfailedtosendalerts

summary: >-

All Alertmanager instances in a cluster failed to send

notifications to a non-critical integration.

expr: |-

min by (namespace,service,integration,cluster) (

rate(alertmanager_notifications_failed_total{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics", integration!~`.*`}[5m])

/

ignoring (reason) group_left rate(alertmanager_notifications_total{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics", integration!~`.*`}[5m])

)

> 0.01

for: 5m

labels:

severity: warning

- alert: AlertmanagerConfigInconsistent

annotations:

description: >-

Alertmanager instances within the {{$labels.job}} cluster have

different configurations.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/alertmanager/alertmanagerconfiginconsistent

summary: >-

Alertmanager instances within the same cluster have different

configurations.

expr: |-

count by (namespace,service,cluster) (

count_values by (namespace,service,cluster) ("config_hash", alertmanager_config_hash{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics"})

)

!= 1

for: 20m

labels:

severity: critical

- alert: AlertmanagerClusterDown

annotations:

description: >-

{{ $value | humanizePercentage }} of Alertmanager instances within

the {{$labels.job}} cluster have been up for less than half of the

last 5m.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/alertmanager/alertmanagerclusterdown

summary: >-

Half or more of the Alertmanager instances within the same cluster

are down.

expr: |-

(

count by (namespace,service,cluster) (

avg_over_time(up{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics"}[5m]) < 0.5

)

/

count by (namespace,service,cluster) (

up{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics"}

)

)

>= 0.5

for: 5m

labels:

severity: critical

- alert: AlertmanagerClusterCrashlooping

annotations:

description: >-

{{ $value | humanizePercentage }} of Alertmanager instances within

the {{$labels.job}} cluster have restarted at least 5 times in the

last 10m.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/alertmanager/alertmanagerclustercrashlooping

summary: >-

Half or more of the Alertmanager instances within the same cluster

are crashlooping.

expr: |-

(

count by (namespace,service,cluster) (

changes(process_start_time_seconds{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics"}[10m]) > 4

)

/

count by (namespace,service,cluster) (

up{job="vmalertmanager-shturval-metrics-collector",namespace="victoria-metrics"}

)

)

>= 0.5

for: 5m

labels:

severity: critical

- name: etcd

rules:

- alert: etcdMembersDown

annotations:

description: 'etcd cluster "{{ $labels.job }}": members are down ({{ $value }}).'

summary: etcd cluster members are down.

expr: |-

max without (endpoint) (

sum without (instance) (up{job=~".*etcd.*"} == bool 0)

or

count without (To) (

sum without (instance) (rate(etcd_network_peer_sent_failures_total{job=~".*etcd.*"}[120s])) > 0.01

)

)

> 0

for: 10m

labels:

severity: critical

- alert: etcdInsufficientMembers

annotations:

description: >-

etcd cluster "{{ $labels.job }}": insufficient members ({{ $value

}}).

summary: etcd cluster has insufficient number of members.

expr: >-

sum(up{job=~".*etcd.*"} == bool 1) without (instance) <

((count(up{job=~".*etcd.*"}) without (instance) + 1) / 2)

for: 3m

labels:

severity: critical

- alert: etcdNoLeader

annotations:

description: >-

etcd cluster "{{ $labels.job }}": member {{ $labels.instance }}

has no leader.

summary: etcd cluster has no leader.

expr: etcd_server_has_leader{job=~".*etcd.*"} == 0

for: 1m

labels:

severity: critical

- alert: etcdHighNumberOfLeaderChanges

annotations:

description: >-

etcd cluster "{{ $labels.job }}": {{ $value }} leader changes

within the last 15 minutes. Frequent elections may be a sign of

insufficient resources, high network latency, or disruptions by

other components and should be investigated.

summary: etcd cluster has high number of leader changes.

expr: >-

increase((max without (instance)

(etcd_server_leader_changes_seen_total{job=~".*etcd.*"}) or

0*absent(etcd_server_leader_changes_seen_total{job=~".*etcd.*"}))[15m:1m])

>= 4

for: 5m

labels:

severity: warning

- alert: etcdHighNumberOfFailedGRPCRequests

annotations:

description: >-

etcd cluster "{{ $labels.job }}": {{ $value }}% of requests for {{

$labels.grpc_method }} failed on etcd instance {{ $labels.instance

}}.

summary: etcd cluster has high number of failed grpc requests.

expr: >-

100 * sum(rate(grpc_server_handled_total{job=~".*etcd.*",

grpc_code=~"Unknown|FailedPrecondition|ResourceExhausted|Internal|Unavailable|DataLoss|DeadlineExceeded"}[5m]))

without (grpc_type, grpc_code)

/

sum(rate(grpc_server_handled_total{job=~".*etcd.*"}[5m])) without

(grpc_type, grpc_code)

> 1

for: 10m

labels:

severity: warning

- alert: etcdHighNumberOfFailedGRPCRequests

annotations:

description: >-

etcd cluster "{{ $labels.job }}": {{ $value }}% of requests for {{

$labels.grpc_method }} failed on etcd instance {{ $labels.instance

}}.

summary: etcd cluster has high number of failed grpc requests.

expr: >-

100 * sum(rate(grpc_server_handled_total{job=~".*etcd.*",

grpc_code=~"Unknown|FailedPrecondition|ResourceExhausted|Internal|Unavailable|DataLoss|DeadlineExceeded"}[5m]))

without (grpc_type, grpc_code)

/

sum(rate(grpc_server_handled_total{job=~".*etcd.*"}[5m])) without

(grpc_type, grpc_code)

> 5

for: 5m

labels:

severity: critical

- alert: etcdGRPCRequestsSlow

annotations:

description: >-

etcd cluster "{{ $labels.job }}": 99th percentile of gRPC requests

is {{ $value }}s on etcd instance {{ $labels.instance }} for {{

$labels.grpc_method }} method.

summary: etcd grpc requests are slow

expr: >-

histogram_quantile(0.99,

sum(rate(grpc_server_handling_seconds_bucket{job=~".*etcd.*",

grpc_method!="Defragment", grpc_type="unary"}[5m]))

without(grpc_type))

> 0.15

for: 10m

labels:

severity: critical

- alert: etcdMemberCommunicationSlow

annotations:

description: >-

etcd cluster "{{ $labels.job }}": member communication with {{

$labels.To }} is taking {{ $value }}s on etcd instance {{

$labels.instance }}.

summary: etcd cluster member communication is slow.

expr: >-

histogram_quantile(0.99,

rate(etcd_network_peer_round_trip_time_seconds_bucket{job=~".*etcd.*"}[5m]))

> 0.15

for: 10m

labels:

severity: warning

- alert: etcdHighNumberOfFailedProposals

annotations:

description: >-

etcd cluster "{{ $labels.job }}": {{ $value }} proposal failures

within the last 30 minutes on etcd instance {{ $labels.instance

}}.

summary: etcd cluster has high number of proposal failures.

expr: rate(etcd_server_proposals_failed_total{job=~".*etcd.*"}[15m]) > 5

for: 15m

labels:

severity: warning

- alert: etcdHighFsyncDurations

annotations:

description: >-

etcd cluster "{{ $labels.job }}": 99th percentile fsync durations

are {{ $value }}s on etcd instance {{ $labels.instance }}.

summary: etcd cluster 99th percentile fsync durations are too high.

expr: >-

histogram_quantile(0.99,

rate(etcd_disk_wal_fsync_duration_seconds_bucket{job=~".*etcd.*"}[5m]))

> 0.5

for: 10m

labels:

severity: warning

- alert: etcdHighFsyncDurations

annotations:

description: >-

etcd cluster "{{ $labels.job }}": 99th percentile fsync durations

are {{ $value }}s on etcd instance {{ $labels.instance }}.

summary: etcd cluster 99th percentile fsync durations are too high.

expr: >-

histogram_quantile(0.99,

rate(etcd_disk_wal_fsync_duration_seconds_bucket{job=~".*etcd.*"}[5m]))

> 1

for: 10m

labels:

severity: critical

- alert: etcdHighCommitDurations

annotations:

description: >-

etcd cluster "{{ $labels.job }}": 99th percentile commit durations

{{ $value }}s on etcd instance {{ $labels.instance }}.

summary: etcd cluster 99th percentile commit durations are too high.

expr: >-

histogram_quantile(0.99,

rate(etcd_disk_backend_commit_duration_seconds_bucket{job=~".*etcd.*"}[5m]))

> 0.25

for: 10m

labels:

severity: warning

- alert: etcdDatabaseQuotaLowSpace

annotations:

description: >-

etcd cluster "{{ $labels.job }}": database size exceeds the

defined quota on etcd instance {{ $labels.instance }}, please

defrag or increase the quota as the writes to etcd will be

disabled when it is full.

summary: etcd cluster database is running full.

expr: >-

(last_over_time(etcd_mvcc_db_total_size_in_bytes{job=~".*etcd.*"}[5m])

/

last_over_time(etcd_server_quota_backend_bytes{job=~".*etcd.*"}[5m]))*100

> 95

for: 10m

labels:

severity: critical

- alert: etcdExcessiveDatabaseGrowth

annotations:

description: >-

etcd cluster "{{ $labels.job }}": Predicting running out of disk

space in the next four hours, based on write observations within

the past four hours on etcd instance {{ $labels.instance }},

please check as it might be disruptive.

summary: etcd cluster database growing very fast.

expr: >-

predict_linear(etcd_mvcc_db_total_size_in_bytes{job=~".*etcd.*"}[4h],

4*60*60) > etcd_server_quota_backend_bytes{job=~".*etcd.*"}

for: 10m

labels:

severity: warning

- alert: etcdDatabaseHighFragmentationRatio

annotations:

description: >-

etcd cluster "{{ $labels.job }}": database size in use on instance

{{ $labels.instance }} is {{ $value | humanizePercentage }} of the

actual allocated disk space, please run defragmentation (e.g.

etcdctl defrag) to retrieve the unused fragmented disk space.

runbook_url: https://etcd.io/docs/v3.5/op-guide/maintenance/#defragmentation

summary: >-

etcd database size in use is less than 50% of the actual allocated

storage.

expr: >-

(last_over_time(etcd_mvcc_db_total_size_in_use_in_bytes{job=~".*etcd.*"}[5m])

/

last_over_time(etcd_mvcc_db_total_size_in_bytes{job=~".*etcd.*"}[5m]))

< 0.5 and etcd_mvcc_db_total_size_in_use_in_bytes{job=~".*etcd.*"} >

104857600

for: 10m

labels:

severity: warning

- name: general.rules

rules:

- alert: TargetDown

annotations:

description: >-

{{ printf "%.4g" $value }}% of the {{ $labels.job }}/{{

$labels.service }} targets in {{ $labels.namespace }} namespace

are down.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/general/targetdown

summary: One or more targets are unreachable.

expr: >-

100 * (count(up == 0) BY (job,namespace,service,cluster) / count(up)

BY (job,namespace,service,cluster)) > 10

for: 10m

labels:

severity: warning

- alert: Watchdog

annotations:

description: >

This is an alert meant to ensure that the entire alerting pipeline

is functional.

This alert is always firing, therefore it should always be firing

in Alertmanager

and always fire against a receiver. There are integrations with

various notification

mechanisms that send a notification when this alert is not firing.

For example the

"DeadMansSnitch" integration in PagerDuty.

runbook_url: https://runbooks.prometheus-operator.dev/runbooks/general/watchdog

summary: >-

An alert that should always be firing to certify that Alertmanager

is working properly.

expr: vector(1)

labels:

severity: none

- alert: InfoInhibitor

annotations:

description: >

This is an alert that is used to inhibit info alerts.

By themselves, the info-level alerts are sometimes very noisy, but

they are relevant when combined with

other alerts.

This alert fires whenever there's a severity="info" alert, and

stops firing when another alert with a

severity of 'warning' or 'critical' starts firing on the same

namespace.

This alert should be routed to a null receiver and configured to

inhibit alerts with severity="info".

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/general/infoinhibitor

summary: Info-level alert inhibition.

expr: >-

ALERTS{severity = "info"} == 1 unless on (namespace,cluster)

ALERTS{alertname != "InfoInhibitor", severity =~ "warning|critical",

alertstate="firing"} == 1

labels:

severity: none

- name: k8s.rules.container_cpu_limits

rules:

- expr: >-

kube_pod_container_resource_limits{resource="cpu",job="kube-state-metrics"}

* on (namespace,pod,cluster)

group_left() max by (namespace,pod,cluster) (

(kube_pod_status_phase{phase=~"Pending|Running"} == 1)

)

record: cluster:namespace:pod_cpu:active:kube_pod_container_resource_limits

- expr: |-

sum by (namespace,cluster) (

sum by (namespace,pod,cluster) (

max by (namespace,pod,container,cluster) (

kube_pod_container_resource_limits{resource="cpu",job="kube-state-metrics"}

) * on (namespace,pod,cluster) group_left() max by (namespace,pod,cluster) (

kube_pod_status_phase{phase=~"Pending|Running"} == 1

)

)

)

record: namespace_cpu:kube_pod_container_resource_limits:sum

- name: k8s.rules.container_cpu_requests

rules:

- expr: >-

kube_pod_container_resource_requests{resource="cpu",job="kube-state-metrics"}

* on (namespace,pod,cluster)

group_left() max by (namespace,pod,cluster) (

(kube_pod_status_phase{phase=~"Pending|Running"} == 1)

)

record: >-

cluster:namespace:pod_cpu:active:kube_pod_container_resource_requests

- expr: |-

sum by (namespace,cluster) (

sum by (namespace,pod,cluster) (

max by (namespace,pod,container,cluster) (

kube_pod_container_resource_requests{resource="cpu",job="kube-state-metrics"}

) * on (namespace,pod,cluster) group_left() max by (namespace,pod,cluster) (

kube_pod_status_phase{phase=~"Pending|Running"} == 1

)

)

)

record: namespace_cpu:kube_pod_container_resource_requests:sum

- name: k8s.rules.container_cpu_usage_seconds_total

rules:

- expr: >-

sum by (namespace,pod,container,cluster) (

irate(container_cpu_usage_seconds_total{job="kubelet", metrics_path="/metrics/cadvisor", image!=""}[5m])

) * on (namespace,pod,cluster) group_left(node) topk by

(namespace,pod,cluster) (

1, max by (namespace,pod,node,cluster) (kube_pod_info{node!=""})

)

record: >-

node_namespace_pod_container:container_cpu_usage_seconds_total:sum_irate

- name: k8s.rules.container_memory_cache

rules:

- expr: >-

container_memory_cache{job="kubelet",

metrics_path="/metrics/cadvisor", image!=""}

* on (namespace,pod,cluster) group_left(node) topk by

(namespace,pod,cluster) (1,

max by (namespace,pod,node,cluster) (kube_pod_info{node!=""})

)

record: node_namespace_pod_container:container_memory_cache

- name: k8s.rules.container_memory_limits

rules:

- expr: >-

kube_pod_container_resource_limits{resource="memory",job="kube-state-metrics"}

* on (namespace,pod,cluster)

group_left() max by (namespace,pod,cluster) (

(kube_pod_status_phase{phase=~"Pending|Running"} == 1)

)

record: >-

cluster:namespace:pod_memory:active:kube_pod_container_resource_limits

- expr: |-

sum by (namespace,cluster) (

sum by (namespace,pod,cluster) (

max by (namespace,pod,container,cluster) (

kube_pod_container_resource_limits{resource="memory",job="kube-state-metrics"}

) * on (namespace,pod,cluster) group_left() max by (namespace,pod,cluster) (

kube_pod_status_phase{phase=~"Pending|Running"} == 1

)

)

)

record: namespace_memory:kube_pod_container_resource_limits:sum

- name: k8s.rules.container_memory_requests

rules:

- expr: >-

kube_pod_container_resource_requests{resource="memory",job="kube-state-metrics"}

* on (namespace,pod,cluster)

group_left() max by (namespace,pod,cluster) (

(kube_pod_status_phase{phase=~"Pending|Running"} == 1)

)

record: >-

cluster:namespace:pod_memory:active:kube_pod_container_resource_requests

- expr: |-

sum by (namespace,cluster) (

sum by (namespace,pod,cluster) (

max by (namespace,pod,container,cluster) (

kube_pod_container_resource_requests{resource="memory",job="kube-state-metrics"}

) * on (namespace,pod,cluster) group_left() max by (namespace,pod,cluster) (

kube_pod_status_phase{phase=~"Pending|Running"} == 1

)

)

)

record: namespace_memory:kube_pod_container_resource_requests:sum

- name: k8s.rules.container_memory_rss

rules:

- expr: >-

container_memory_rss{job="kubelet",

metrics_path="/metrics/cadvisor", image!=""}

* on (namespace,pod,cluster) group_left(node) topk by

(namespace,pod,cluster) (1,

max by (namespace,pod,node,cluster) (kube_pod_info{node!=""})

)

record: node_namespace_pod_container:container_memory_rss

- name: k8s.rules.container_memory_swap

rules:

- expr: >-

container_memory_swap{job="kubelet",

metrics_path="/metrics/cadvisor", image!=""}

* on (namespace,pod,cluster) group_left(node) topk by

(namespace,pod,cluster) (1,

max by (namespace,pod,node,cluster) (kube_pod_info{node!=""})

)

record: node_namespace_pod_container:container_memory_swap

- name: k8s.rules.container_memory_working_set_bytes

rules:

- expr: >-

container_memory_working_set_bytes{job="kubelet",

metrics_path="/metrics/cadvisor", image!=""}

* on (namespace,pod,cluster) group_left(node) topk by

(namespace,pod,cluster) (1,

max by (namespace,pod,node,cluster) (kube_pod_info{node!=""})

)

record: node_namespace_pod_container:container_memory_working_set_bytes

- name: k8s.rules.pod_owner

rules:

- expr: |-

max by (namespace,workload,pod,cluster) (

label_replace(

label_replace(

kube_pod_owner{job="kube-state-metrics", owner_kind="ReplicaSet"},

"replicaset", "$1", "owner_name", "(.*)"

) * on (replicaset,namespace,cluster) group_left(owner_name) topk by (replicaset,namespace,cluster) (

1, max by (replicaset,namespace,owner_name,cluster) (

kube_replicaset_owner{job="kube-state-metrics"}

)

),

"workload", "$1", "owner_name", "(.*)"

)

)

labels:

workload_type: deployment

record: namespace_workload_pod:kube_pod_owner:relabel

- expr: |-

max by (namespace,workload,pod,cluster) (

label_replace(

kube_pod_owner{job="kube-state-metrics", owner_kind="DaemonSet"},

"workload", "$1", "owner_name", "(.*)"

)

)

labels:

workload_type: daemonset

record: namespace_workload_pod:kube_pod_owner:relabel

- expr: |-

max by (namespace,workload,pod,cluster) (

label_replace(

kube_pod_owner{job="kube-state-metrics", owner_kind="StatefulSet"},

"workload", "$1", "owner_name", "(.*)"

)

)

labels:

workload_type: statefulset

record: namespace_workload_pod:kube_pod_owner:relabel

- expr: |-

max by (namespace,workload,pod,cluster) (

label_replace(

kube_pod_owner{job="kube-state-metrics", owner_kind="Job"},

"workload", "$1", "owner_name", "(.*)"

)

)

labels:

workload_type: job

record: namespace_workload_pod:kube_pod_owner:relabel

- interval: 3m

name: kube-apiserver-availability.rules

rules:

- expr: >-

avg_over_time(code_verb:apiserver_request_total:increase1h[30d]) *

24 * 30

record: code_verb:apiserver_request_total:increase30d

- expr: >-

sum by (code,cluster)

(code_verb:apiserver_request_total:increase30d{verb=~"LIST|GET"})

labels:

verb: read

record: code:apiserver_request_total:increase30d

- expr: >-

sum by (code,cluster)

(code_verb:apiserver_request_total:increase30d{verb=~"POST|PUT|PATCH|DELETE"})

labels:

verb: write

record: code:apiserver_request_total:increase30d

- expr: >-

sum by (verb,scope,le,cluster)

(increase(apiserver_request_sli_duration_seconds_bucket[1h]))

record: >-

cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase1h

- expr: >-

sum by (verb,scope,le,cluster)

(avg_over_time(cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase1h[30d])

* 24 * 30)

record: >-

cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase30d

- expr: >-

sum by (verb,scope,cluster)

(cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase1h{le="+Inf"})

record: >-

cluster_verb_scope:apiserver_request_sli_duration_seconds_count:increase1h

- expr: >-

sum by (verb,scope,cluster)

(cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase30d{le="+Inf"})

record: >-

cluster_verb_scope:apiserver_request_sli_duration_seconds_count:increase30d

- expr: |-

1 - (

(

# write too slow

sum by (cluster) (cluster_verb_scope:apiserver_request_sli_duration_seconds_count:increase30d{verb=~"POST|PUT|PATCH|DELETE"})

-

sum by (cluster) (cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase30d{verb=~"POST|PUT|PATCH|DELETE",le=~"1(\\.0)?"})

) +

(

# read too slow

sum by (cluster) (cluster_verb_scope:apiserver_request_sli_duration_seconds_count:increase30d{verb=~"LIST|GET"})

-

(

(

sum by (cluster) (cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase30d{verb=~"LIST|GET",scope=~"resource|",le=~"1(\\.0)?"})

or

vector(0)

)

+

sum by (cluster) (cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase30d{verb=~"LIST|GET",scope="namespace",le=~"5(\\.0)?"})

+

sum by (cluster) (cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase30d{verb=~"LIST|GET",scope="cluster",le=~"30(\\.0)?"})

)

) +

# errors

sum by (cluster) (code:apiserver_request_total:increase30d{code=~"5.."} or vector(0))

)

/

sum by (cluster) (code:apiserver_request_total:increase30d)

labels:

verb: all

record: apiserver_request:availability30d

- expr: >-

1 - (

sum by (cluster) (cluster_verb_scope:apiserver_request_sli_duration_seconds_count:increase30d{verb=~"LIST|GET"})

-

(

# too slow

(

sum by (cluster) (cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase30d{verb=~"LIST|GET",scope=~"resource|",le=~"1(\\.0)?"})

or

vector(0)

)

+

sum by (cluster) (cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase30d{verb=~"LIST|GET",scope="namespace",le=~"5(\\.0)?"})

+

sum by (cluster) (cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase30d{verb=~"LIST|GET",scope="cluster",le=~"30(\\.0)?"})

)

+

# errors

sum by (cluster) (code:apiserver_request_total:increase30d{verb="read",code=~"5.."} or vector(0))

)

/

sum by (cluster)

(code:apiserver_request_total:increase30d{verb="read"})

labels:

verb: read

record: apiserver_request:availability30d

- expr: >-

1 - (

(

# too slow

sum by (cluster) (cluster_verb_scope:apiserver_request_sli_duration_seconds_count:increase30d{verb=~"POST|PUT|PATCH|DELETE"})

-

sum by (cluster) (cluster_verb_scope_le:apiserver_request_sli_duration_seconds_bucket:increase30d{verb=~"POST|PUT|PATCH|DELETE",le=~"1(\\.0)?"})

)

+

# errors

sum by (cluster) (code:apiserver_request_total:increase30d{verb="write",code=~"5.."} or vector(0))

)

/

sum by (cluster)

(code:apiserver_request_total:increase30d{verb="write"})

labels:

verb: write

record: apiserver_request:availability30d

- expr: >-

sum by (code,resource,cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET"}[5m]))

labels:

verb: read

record: code_resource:apiserver_request_total:rate5m

- expr: >-

sum by (code,resource,cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE"}[5m]))

labels:

verb: write

record: code_resource:apiserver_request_total:rate5m

- expr: >-

sum by (code,verb,cluster)

(increase(apiserver_request_total{job="apiserver",verb=~"LIST|GET|POST|PUT|PATCH|DELETE",code=~"2.."}[1h]))

record: code_verb:apiserver_request_total:increase1h

- expr: >-

sum by (code,verb,cluster)

(increase(apiserver_request_total{job="apiserver",verb=~"LIST|GET|POST|PUT|PATCH|DELETE",code=~"3.."}[1h]))

record: code_verb:apiserver_request_total:increase1h

- expr: >-

sum by (code,verb,cluster)

(increase(apiserver_request_total{job="apiserver",verb=~"LIST|GET|POST|PUT|PATCH|DELETE",code=~"4.."}[1h]))

record: code_verb:apiserver_request_total:increase1h

- expr: >-

sum by (code,verb,cluster)

(increase(apiserver_request_total{job="apiserver",verb=~"LIST|GET|POST|PUT|PATCH|DELETE",code=~"5.."}[1h]))

record: code_verb:apiserver_request_total:increase1h

- name: kube-apiserver-burnrate.rules

rules:

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward"}[1d]))

-

(

(

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope=~"resource|",le=~"1(\\.0)?"}[1d]))

or

vector(0)

)

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="namespace",le=~"5(\\.0)?"}[1d]))

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="cluster",le=~"30(\\.0)?"}[1d]))

)

)

+

# errors

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET",code=~"5.."}[1d]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET"}[1d]))

labels:

verb: read

record: apiserver_request:burnrate1d

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward"}[1h]))

-

(

(

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope=~"resource|",le=~"1(\\.0)?"}[1h]))

or

vector(0)

)

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="namespace",le=~"5(\\.0)?"}[1h]))

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="cluster",le=~"30(\\.0)?"}[1h]))

)

)

+

# errors

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET",code=~"5.."}[1h]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET"}[1h]))

labels:

verb: read

record: apiserver_request:burnrate1h

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward"}[2h]))

-

(

(

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope=~"resource|",le=~"1(\\.0)?"}[2h]))

or

vector(0)

)

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="namespace",le=~"5(\\.0)?"}[2h]))

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="cluster",le=~"30(\\.0)?"}[2h]))

)

)

+

# errors

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET",code=~"5.."}[2h]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET"}[2h]))

labels:

verb: read

record: apiserver_request:burnrate2h

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward"}[30m]))

-

(

(

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope=~"resource|",le=~"1(\\.0)?"}[30m]))

or

vector(0)

)

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="namespace",le=~"5(\\.0)?"}[30m]))

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="cluster",le=~"30(\\.0)?"}[30m]))

)

)

+

# errors

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET",code=~"5.."}[30m]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET"}[30m]))

labels:

verb: read

record: apiserver_request:burnrate30m

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward"}[3d]))

-

(

(

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope=~"resource|",le=~"1(\\.0)?"}[3d]))

or

vector(0)

)

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="namespace",le=~"5(\\.0)?"}[3d]))

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="cluster",le=~"30(\\.0)?"}[3d]))

)

)

+

# errors

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET",code=~"5.."}[3d]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET"}[3d]))

labels:

verb: read

record: apiserver_request:burnrate3d

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward"}[5m]))

-

(

(

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope=~"resource|",le=~"1(\\.0)?"}[5m]))

or

vector(0)

)

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="namespace",le=~"5(\\.0)?"}[5m]))

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="cluster",le=~"30(\\.0)?"}[5m]))

)

)

+

# errors

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET",code=~"5.."}[5m]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET"}[5m]))

labels:

verb: read

record: apiserver_request:burnrate5m

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward"}[6h]))

-

(

(

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope=~"resource|",le=~"1(\\.0)?"}[6h]))

or

vector(0)

)

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="namespace",le=~"5(\\.0)?"}[6h]))

+

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward",scope="cluster",le=~"30(\\.0)?"}[6h]))

)

)

+

# errors

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET",code=~"5.."}[6h]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"LIST|GET"}[6h]))

labels:

verb: read

record: apiserver_request:burnrate6h

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward"}[1d]))

-

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward",le=~"1(\\.0)?"}[1d]))

)

+

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",code=~"5.."}[1d]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE"}[1d]))

labels:

verb: write

record: apiserver_request:burnrate1d

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward"}[1h]))

-

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward",le=~"1(\\.0)?"}[1h]))

)

+

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",code=~"5.."}[1h]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE"}[1h]))

labels:

verb: write

record: apiserver_request:burnrate1h

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward"}[2h]))

-

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward",le=~"1(\\.0)?"}[2h]))

)

+

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",code=~"5.."}[2h]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE"}[2h]))

labels:

verb: write

record: apiserver_request:burnrate2h

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward"}[30m]))

-

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward",le=~"1(\\.0)?"}[30m]))

)

+

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",code=~"5.."}[30m]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE"}[30m]))

labels:

verb: write

record: apiserver_request:burnrate30m

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward"}[3d]))

-

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward",le=~"1(\\.0)?"}[3d]))

)

+

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",code=~"5.."}[3d]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE"}[3d]))

labels:

verb: write

record: apiserver_request:burnrate3d

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward"}[5m]))

-

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward",le=~"1(\\.0)?"}[5m]))

)

+

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",code=~"5.."}[5m]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE"}[5m]))

labels:

verb: write

record: apiserver_request:burnrate5m

- expr: >-

(

(

# too slow

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_count{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward"}[6h]))

-

sum by (cluster) (rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward",le=~"1(\\.0)?"}[6h]))

)

+

sum by (cluster) (rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",code=~"5.."}[6h]))

)

/

sum by (cluster)

(rate(apiserver_request_total{job="apiserver",verb=~"POST|PUT|PATCH|DELETE"}[6h]))

labels:

verb: write

record: apiserver_request:burnrate6h

- name: kube-apiserver-histogram.rules

rules:

- expr: >-

histogram_quantile(0.99, sum by (le,resource,cluster)

(rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"LIST|GET",subresource!~"proxy|attach|log|exec|portforward"}[5m])))

> 0

labels:

quantile: '0.99'

verb: read

record: >-

cluster_quantile:apiserver_request_sli_duration_seconds:histogram_quantile

- expr: >-

histogram_quantile(0.99, sum by (le,resource,cluster)

(rate(apiserver_request_sli_duration_seconds_bucket{job="apiserver",verb=~"POST|PUT|PATCH|DELETE",subresource!~"proxy|attach|log|exec|portforward"}[5m])))

> 0

labels:

quantile: '0.99'

verb: write

record: >-

cluster_quantile:apiserver_request_sli_duration_seconds:histogram_quantile

- name: kube-apiserver-slos

rules:

- alert: KubeAPIErrorBudgetBurn

annotations:

description: >-

The API server is burning too much error budget on cluster {{

$labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubeapierrorbudgetburn

summary: The API server is burning too much error budget.

expr: |-

sum by (cluster) (apiserver_request:burnrate1h) > (14.40 * 0.01000)

and on (cluster)

sum by (cluster) (apiserver_request:burnrate5m) > (14.40 * 0.01000)

for: 2m

labels:

long: 1h

severity: critical

short: 5m

- alert: KubeAPIErrorBudgetBurn

annotations:

description: >-

The API server is burning too much error budget on cluster {{

$labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubeapierrorbudgetburn

summary: The API server is burning too much error budget.

expr: |-

sum by (cluster) (apiserver_request:burnrate6h) > (6.00 * 0.01000)

and on (cluster)

sum by (cluster) (apiserver_request:burnrate30m) > (6.00 * 0.01000)

for: 15m

labels:

long: 6h

severity: critical

short: 30m

- alert: KubeAPIErrorBudgetBurn

annotations:

description: >-

The API server is burning too much error budget on cluster {{

$labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubeapierrorbudgetburn

summary: The API server is burning too much error budget.

expr: |-

sum by (cluster) (apiserver_request:burnrate1d) > (3.00 * 0.01000)

and on (cluster)

sum by (cluster) (apiserver_request:burnrate2h) > (3.00 * 0.01000)

for: 1h

labels:

long: 1d

severity: warning

short: 2h

- alert: KubeAPIErrorBudgetBurn

annotations:

description: >-

The API server is burning too much error budget on cluster {{

$labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubeapierrorbudgetburn

summary: The API server is burning too much error budget.

expr: |-

sum by (cluster) (apiserver_request:burnrate3d) > (1.00 * 0.01000)

and on (cluster)

sum by (cluster) (apiserver_request:burnrate6h) > (1.00 * 0.01000)

for: 3h

labels:

long: 3d

severity: warning

short: 6h

- name: kube-prometheus-general.rules

rules:

- expr: count without(instance, pod, node) (up == 1)

record: count:up1

- expr: count without(instance, pod, node) (up == 0)

record: count:up0

- name: kube-prometheus-node-recording.rules

rules:

- expr: >-

sum(rate(node_cpu_seconds_total{mode!="idle",mode!="iowait",mode!="steal"}[3m]))

BY (instance,cluster)

record: instance:node_cpu:rate:sum

- expr: >-

sum(rate(node_network_receive_bytes_total[3m])) BY

(instance,cluster)

record: instance:node_network_receive_bytes:rate:sum

- expr: >-

sum(rate(node_network_transmit_bytes_total[3m])) BY

(instance,cluster)

record: instance:node_network_transmit_bytes:rate:sum

- expr: >-

sum(rate(node_cpu_seconds_total{mode!="idle",mode!="iowait",mode!="steal"}[5m]))

WITHOUT (cpu, mode) / ON (instance,cluster) GROUP_LEFT()

count(sum(node_cpu_seconds_total) BY (instance,cpu,cluster)) BY

(instance,cluster)

record: instance:node_cpu:ratio

- expr: >-

sum(rate(node_cpu_seconds_total{mode!="idle",mode!="iowait",mode!="steal"}[5m]))

BY (cluster)

record: cluster:node_cpu:sum_rate5m

- expr: >-

cluster:node_cpu:sum_rate5m / count(sum(node_cpu_seconds_total) BY

(instance,cpu,cluster)) BY (cluster)

record: cluster:node_cpu:ratio

- name: kube-scheduler.rules

rules:

- expr: >-

histogram_quantile(0.99,

sum(rate(scheduler_e2e_scheduling_duration_seconds_bucket{job="kube-scheduler"}[5m]))

without(instance, pod))

labels:

quantile: '0.99'

record: >-

cluster_quantile:scheduler_e2e_scheduling_duration_seconds:histogram_quantile

- expr: >-

histogram_quantile(0.99,

sum(rate(scheduler_scheduling_algorithm_duration_seconds_bucket{job="kube-scheduler"}[5m]))

without(instance, pod))

labels:

quantile: '0.99'

record: >-

cluster_quantile:scheduler_scheduling_algorithm_duration_seconds:histogram_quantile

- expr: >-

histogram_quantile(0.99,

sum(rate(scheduler_binding_duration_seconds_bucket{job="kube-scheduler"}[5m]))

without(instance, pod))

labels:

quantile: '0.99'

record: >-

cluster_quantile:scheduler_binding_duration_seconds:histogram_quantile

- expr: >-

histogram_quantile(0.9,

sum(rate(scheduler_e2e_scheduling_duration_seconds_bucket{job="kube-scheduler"}[5m]))

without(instance, pod))

labels:

quantile: '0.9'

record: >-

cluster_quantile:scheduler_e2e_scheduling_duration_seconds:histogram_quantile

- expr: >-

histogram_quantile(0.9,

sum(rate(scheduler_scheduling_algorithm_duration_seconds_bucket{job="kube-scheduler"}[5m]))

without(instance, pod))

labels:

quantile: '0.9'

record: >-

cluster_quantile:scheduler_scheduling_algorithm_duration_seconds:histogram_quantile

- expr: >-

histogram_quantile(0.9,

sum(rate(scheduler_binding_duration_seconds_bucket{job="kube-scheduler"}[5m]))

without(instance, pod))

labels:

quantile: '0.9'

record: >-

cluster_quantile:scheduler_binding_duration_seconds:histogram_quantile

- expr: >-

histogram_quantile(0.5,

sum(rate(scheduler_e2e_scheduling_duration_seconds_bucket{job="kube-scheduler"}[5m]))

without(instance, pod))

labels:

quantile: '0.5'

record: >-

cluster_quantile:scheduler_e2e_scheduling_duration_seconds:histogram_quantile

- expr: >-

histogram_quantile(0.5,

sum(rate(scheduler_scheduling_algorithm_duration_seconds_bucket{job="kube-scheduler"}[5m]))

without(instance, pod))

labels:

quantile: '0.5'

record: >-

cluster_quantile:scheduler_scheduling_algorithm_duration_seconds:histogram_quantile

- expr: >-

histogram_quantile(0.5,

sum(rate(scheduler_binding_duration_seconds_bucket{job="kube-scheduler"}[5m]))

without(instance, pod))

labels:

quantile: '0.5'

record: >-

cluster_quantile:scheduler_binding_duration_seconds:histogram_quantile

- name: kube-state-metrics

rules:

- alert: KubeStateMetricsListErrors

annotations:

description: >-

kube-state-metrics is experiencing errors at an elevated rate in

list operations. This is likely causing it to not be able to

expose metrics about Kubernetes objects correctly or at all.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kube-state-metrics/kubestatemetricslisterrors

summary: kube-state-metrics is experiencing errors in list operations.

expr: >-

(sum(rate(kube_state_metrics_list_total{job="kube-state-metrics",result="error"}[5m]))

by (cluster)

/

sum(rate(kube_state_metrics_list_total{job="kube-state-metrics"}[5m]))

by (cluster))

> 0.01

for: 15m

labels:

severity: critical

- alert: KubeStateMetricsWatchErrors

annotations:

description: >-

kube-state-metrics is experiencing errors at an elevated rate in

watch operations. This is likely causing it to not be able to

expose metrics about Kubernetes objects correctly or at all.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kube-state-metrics/kubestatemetricswatcherrors

summary: kube-state-metrics is experiencing errors in watch operations.

expr: >-

(sum(rate(kube_state_metrics_watch_total{job="kube-state-metrics",result="error"}[5m]))

by (cluster)

/

sum(rate(kube_state_metrics_watch_total{job="kube-state-metrics"}[5m]))

by (cluster))

> 0.01

for: 15m

labels:

severity: critical

- alert: KubeStateMetricsShardingMismatch

annotations:

description: >-

kube-state-metrics pods are running with different --total-shards

configuration, some Kubernetes objects may be exposed multiple

times or not exposed at all.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kube-state-metrics/kubestatemetricsshardingmismatch

summary: kube-state-metrics sharding is misconfigured.

expr: >-

stdvar (kube_state_metrics_total_shards{job="kube-state-metrics"})

by (cluster) != 0

for: 15m

labels:

severity: critical

- alert: KubeStateMetricsShardsMissing

annotations:

description: >-

kube-state-metrics shards are missing, some Kubernetes objects are

not being exposed.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kube-state-metrics/kubestatemetricsshardsmissing

summary: kube-state-metrics shards are missing.

expr: >-

2^max(kube_state_metrics_total_shards{job="kube-state-metrics"}) by

(cluster) - 1

-

sum( 2 ^ max by (shard_ordinal,cluster)

(kube_state_metrics_shard_ordinal{job="kube-state-metrics"}) ) by

(cluster)

!= 0

for: 15m

labels:

severity: critical

- name: kubelet.rules

rules:

- expr: >-

histogram_quantile(0.99,

sum(rate(kubelet_pleg_relist_duration_seconds_bucket{job="kubelet",

metrics_path="/metrics"}[5m])) by (instance,le,cluster) * on

(instance,cluster) group_left(node) kubelet_node_name{job="kubelet",

metrics_path="/metrics"})

labels:

quantile: '0.99'

record: >-

node_quantile:kubelet_pleg_relist_duration_seconds:histogram_quantile

- expr: >-

histogram_quantile(0.9,

sum(rate(kubelet_pleg_relist_duration_seconds_bucket{job="kubelet",

metrics_path="/metrics"}[5m])) by (instance,le,cluster) * on

(instance,cluster) group_left(node) kubelet_node_name{job="kubelet",

metrics_path="/metrics"})

labels:

quantile: '0.9'

record: >-

node_quantile:kubelet_pleg_relist_duration_seconds:histogram_quantile

- expr: >-

histogram_quantile(0.5,

sum(rate(kubelet_pleg_relist_duration_seconds_bucket{job="kubelet",

metrics_path="/metrics"}[5m])) by (instance,le,cluster) * on

(instance,cluster) group_left(node) kubelet_node_name{job="kubelet",

metrics_path="/metrics"})

labels:

quantile: '0.5'

record: >-

node_quantile:kubelet_pleg_relist_duration_seconds:histogram_quantile

- name: kubernetes-apps

rules:

- alert: KubePodCrashLooping

annotations:

description: >-

Pod {{ $labels.namespace }}/{{ $labels.pod }} ({{

$labels.container }}) is in waiting state (reason:

"CrashLoopBackOff") on cluster {{ $labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubepodcrashlooping

summary: Pod is crash looping.

expr: >-

max_over_time(kube_pod_container_status_waiting_reason{reason="CrashLoopBackOff",

job="kube-state-metrics", namespace=~".*"}[5m]) >= 1

for: 15m

labels:

severity: warning

- alert: KubePodNotReady

annotations:

description: >-

Pod {{ $labels.namespace }}/{{ $labels.pod }} has been in a

non-ready state for longer than 15 minutes on cluster {{

$labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubepodnotready

summary: Pod has been in a non-ready state for more than 15 minutes.

expr: |-

sum by (namespace,pod,cluster) (

max by (namespace,pod,cluster) (

kube_pod_status_phase{job="kube-state-metrics", namespace=~".*", phase=~"Pending|Unknown|Failed"}

) * on (namespace,pod,cluster) group_left(owner_kind) topk by (namespace,pod,cluster) (

1, max by (namespace,pod,owner_kind,cluster) (kube_pod_owner{owner_kind!="Job"})

)

) > 0

for: 15m

labels:

severity: warning

- alert: KubeDeploymentGenerationMismatch

annotations:

description: >-

Deployment generation for {{ $labels.namespace }}/{{

$labels.deployment }} does not match, this indicates that the

Deployment has failed but has not been rolled back on cluster {{

$labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubedeploymentgenerationmismatch

summary: Deployment generation mismatch due to possible roll-back

expr: >-

kube_deployment_status_observed_generation{job="kube-state-metrics",

namespace=~".*"}

!=

kube_deployment_metadata_generation{job="kube-state-metrics",

namespace=~".*"}

for: 15m

labels:

severity: warning

- alert: KubeDeploymentReplicasMismatch

annotations:

description: >-

Deployment {{ $labels.namespace }}/{{ $labels.deployment }} has

not matched the expected number of replicas for longer than 15

minutes on cluster {{ $labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubedeploymentreplicasmismatch

summary: Deployment has not matched the expected number of replicas.

expr: |-

(

kube_deployment_spec_replicas{job="kube-state-metrics", namespace=~".*"}

>

kube_deployment_status_replicas_available{job="kube-state-metrics", namespace=~".*"}

) and (

changes(kube_deployment_status_replicas_updated{job="kube-state-metrics", namespace=~".*"}[10m])

==

0

)

for: 15m

labels:

severity: warning

- alert: KubeDeploymentRolloutStuck

annotations:

description: >-

Rollout of deployment {{ $labels.namespace }}/{{

$labels.deployment }} is not progressing for longer than 15

minutes on cluster {{ $labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubedeploymentrolloutstuck

summary: Deployment rollout is not progressing.

expr: >-

kube_deployment_status_condition{condition="Progressing",

status="false",job="kube-state-metrics", namespace=~".*"}

!= 0

for: 15m

labels:

severity: warning

- alert: KubeStatefulSetReplicasMismatch

annotations:

description: >-

StatefulSet {{ $labels.namespace }}/{{ $labels.statefulset }} has

not matched the expected number of replicas for longer than 15

minutes on cluster {{ $labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubestatefulsetreplicasmismatch

summary: StatefulSet has not matched the expected number of replicas.

expr: |-

(

kube_statefulset_status_replicas_ready{job="kube-state-metrics", namespace=~".*"}

!=

kube_statefulset_status_replicas{job="kube-state-metrics", namespace=~".*"}

) and (

changes(kube_statefulset_status_replicas_updated{job="kube-state-metrics", namespace=~".*"}[10m])

==

0

)

for: 15m

labels:

severity: warning

- alert: KubeStatefulSetGenerationMismatch

annotations:

description: >-

StatefulSet generation for {{ $labels.namespace }}/{{

$labels.statefulset }} does not match, this indicates that the

StatefulSet has failed but has not been rolled back on cluster {{

$labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubestatefulsetgenerationmismatch

summary: StatefulSet generation mismatch due to possible roll-back

expr: >-

kube_statefulset_status_observed_generation{job="kube-state-metrics",

namespace=~".*"}

!=

kube_statefulset_metadata_generation{job="kube-state-metrics",

namespace=~".*"}

for: 15m

labels:

severity: warning

- alert: KubeStatefulSetUpdateNotRolledOut

annotations:

description: >-

StatefulSet {{ $labels.namespace }}/{{ $labels.statefulset }}

update has not been rolled out on cluster {{ $labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubestatefulsetupdatenotrolledout

summary: StatefulSet update has not been rolled out.

expr: |-

(

max by (namespace,statefulset,job,cluster) (

kube_statefulset_status_current_revision{job="kube-state-metrics", namespace=~".*"}

unless

kube_statefulset_status_update_revision{job="kube-state-metrics", namespace=~".*"}

)

*

(

kube_statefulset_replicas{job="kube-state-metrics", namespace=~".*"}

!=

kube_statefulset_status_replicas_updated{job="kube-state-metrics", namespace=~".*"}

)

) and (

changes(kube_statefulset_status_replicas_updated{job="kube-state-metrics", namespace=~".*"}[5m])

==

0

)

for: 15m

labels:

severity: warning

- alert: KubeDaemonSetRolloutStuck

annotations:

description: >-

DaemonSet {{ $labels.namespace }}/{{ $labels.daemonset }} has not

finished or progressed for at least 15m on cluster {{

$labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubedaemonsetrolloutstuck

summary: DaemonSet rollout is stuck.

expr: |-

(

(

kube_daemonset_status_current_number_scheduled{job="kube-state-metrics", namespace=~".*"}

!=

kube_daemonset_status_desired_number_scheduled{job="kube-state-metrics", namespace=~".*"}

) or (

kube_daemonset_status_number_misscheduled{job="kube-state-metrics", namespace=~".*"}

!=

0

) or (

kube_daemonset_status_updated_number_scheduled{job="kube-state-metrics", namespace=~".*"}

!=

kube_daemonset_status_desired_number_scheduled{job="kube-state-metrics", namespace=~".*"}

) or (

kube_daemonset_status_number_available{job="kube-state-metrics", namespace=~".*"}

!=

kube_daemonset_status_desired_number_scheduled{job="kube-state-metrics", namespace=~".*"}

)

) and (

changes(kube_daemonset_status_updated_number_scheduled{job="kube-state-metrics", namespace=~".*"}[5m])

==

0

)

for: 15m

labels:

severity: warning

- alert: KubeContainerWaiting

annotations:

description: >-

pod/{{ $labels.pod }} in namespace {{ $labels.namespace }} on

container {{ $labels.container}} has been in waiting state for

longer than 1 hour. (reason: "{{ $labels.reason }}") on cluster {{

$labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubecontainerwaiting

summary: Pod container waiting longer than 1 hour

expr: >-

kube_pod_container_status_waiting_reason{reason!="CrashLoopBackOff",

job="kube-state-metrics", namespace=~".*"} > 0

for: 1h

labels:

severity: warning

- alert: KubeDaemonSetNotScheduled

annotations:

description: >-

{{ $value }} Pods of DaemonSet {{ $labels.namespace }}/{{

$labels.daemonset }} are not scheduled on cluster {{

$labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubedaemonsetnotscheduled

summary: DaemonSet pods are not scheduled.

expr: >-

kube_daemonset_status_desired_number_scheduled{job="kube-state-metrics",

namespace=~".*"}

-

kube_daemonset_status_current_number_scheduled{job="kube-state-metrics",

namespace=~".*"} > 0

for: 10m

labels:

severity: warning

- alert: KubeDaemonSetMisScheduled

annotations:

description: >-

{{ $value }} Pods of DaemonSet {{ $labels.namespace }}/{{

$labels.daemonset }} are running where they are not supposed to

run on cluster {{ $labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubedaemonsetmisscheduled

summary: DaemonSet pods are misscheduled.

expr: >-

kube_daemonset_status_number_misscheduled{job="kube-state-metrics",

namespace=~".*"} > 0

for: 15m

labels:

severity: warning

- alert: KubeJobNotCompleted

annotations:

description: >-

Job {{ $labels.namespace }}/{{ $labels.job_name }} is taking more

than {{ "43200" | humanizeDuration }} to complete on cluster {{

$labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubejobnotcompleted

summary: Job did not complete in time

expr: >-

time() - max by (namespace,job_name,cluster)

(kube_job_status_start_time{job="kube-state-metrics",

namespace=~".*"}

and

kube_job_status_active{job="kube-state-metrics", namespace=~".*"} >

0) > 43200

labels:

severity: warning

- alert: KubeJobFailed

annotations:

description: >-

Job {{ $labels.namespace }}/{{ $labels.job_name }} failed to

complete. Removing failed job after investigation should clear

this alert on cluster {{ $labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubejobfailed

summary: Job failed to complete.

expr: kube_job_failed{job="kube-state-metrics", namespace=~".*"} > 0

for: 15m

labels:

severity: warning

- alert: KubeHpaReplicasMismatch

annotations:

description: >-

HPA {{ $labels.namespace }}/{{ $labels.horizontalpodautoscaler }}

has not matched the desired number of replicas for longer than 15

minutes on cluster {{ $labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubehpareplicasmismatch

summary: HPA has not matched desired number of replicas.

expr: >-

(kube_horizontalpodautoscaler_status_desired_replicas{job="kube-state-metrics",

namespace=~".*"}

!=

kube_horizontalpodautoscaler_status_current_replicas{job="kube-state-metrics",

namespace=~".*"})

and

(kube_horizontalpodautoscaler_status_current_replicas{job="kube-state-metrics",

namespace=~".*"}

>

kube_horizontalpodautoscaler_spec_min_replicas{job="kube-state-metrics",

namespace=~".*"})

and

(kube_horizontalpodautoscaler_status_current_replicas{job="kube-state-metrics",

namespace=~".*"}

<

kube_horizontalpodautoscaler_spec_max_replicas{job="kube-state-metrics",

namespace=~".*"})

and

changes(kube_horizontalpodautoscaler_status_current_replicas{job="kube-state-metrics",

namespace=~".*"}[15m]) == 0

for: 15m

labels:

severity: warning

- alert: KubeHpaMaxedOut

annotations:

description: >-

HPA {{ $labels.namespace }}/{{ $labels.horizontalpodautoscaler }}

has been running at max replicas for longer than 15 minutes on

cluster {{ $labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubehpamaxedout

summary: HPA is running at max replicas

expr: >-

kube_horizontalpodautoscaler_status_current_replicas{job="kube-state-metrics",

namespace=~".*"}

==

kube_horizontalpodautoscaler_spec_max_replicas{job="kube-state-metrics",

namespace=~".*"}

for: 15m

labels:

severity: warning

- name: kubernetes-resources

rules:

- alert: KubeCPUOvercommit

annotations:

description: >-

Cluster {{ $labels.cluster }} has overcommitted CPU resource

requests for Pods by {{ $value }} CPU shares and cannot tolerate

node failure.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubecpuovercommit

summary: Cluster has overcommitted CPU resource requests.

expr: >-

sum(namespace_cpu:kube_pod_container_resource_requests:sum{}) by

(cluster) -

(sum(kube_node_status_allocatable{job="kube-state-metrics",resource="cpu"})

by (cluster) -

max(kube_node_status_allocatable{job="kube-state-metrics",resource="cpu"})

by (cluster)) > 0

and

(sum(kube_node_status_allocatable{job="kube-state-metrics",resource="cpu"})

by (cluster) -

max(kube_node_status_allocatable{job="kube-state-metrics",resource="cpu"})

by (cluster)) > 0

for: 10m

labels:

severity: warning

- alert: KubeMemoryOvercommit

annotations:

description: >-

Cluster {{ $labels.cluster }} has overcommitted memory resource

requests for Pods by {{ $value | humanize }} bytes and cannot

tolerate node failure.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubememoryovercommit

summary: Cluster has overcommitted memory resource requests.

expr: >-

sum(namespace_memory:kube_pod_container_resource_requests:sum{}) by

(cluster) - (sum(kube_node_status_allocatable{resource="memory",

job="kube-state-metrics"}) by (cluster) -

max(kube_node_status_allocatable{resource="memory",

job="kube-state-metrics"}) by (cluster)) > 0

and

(sum(kube_node_status_allocatable{resource="memory",

job="kube-state-metrics"}) by (cluster) -

max(kube_node_status_allocatable{resource="memory",

job="kube-state-metrics"}) by (cluster)) > 0

for: 10m

labels:

severity: warning

- alert: KubeCPUQuotaOvercommit

annotations:

description: >-

Cluster {{ $labels.cluster }} has overcommitted CPU resource

requests for Namespaces.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubecpuquotaovercommit

summary: Cluster has overcommitted CPU resource requests.

expr: >-

sum(min without(resource)

(kube_resourcequota{job="kube-state-metrics", type="hard",

resource=~"(cpu|requests.cpu)"})) by (cluster)

/

sum(kube_node_status_allocatable{resource="cpu",

job="kube-state-metrics"}) by (cluster)

> 1.5

for: 5m

labels:

severity: warning

- alert: KubeMemoryQuotaOvercommit

annotations:

description: >-

Cluster {{ $labels.cluster }} has overcommitted memory resource

requests for Namespaces.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubememoryquotaovercommit

summary: Cluster has overcommitted memory resource requests.

expr: >-

sum(min without(resource)

(kube_resourcequota{job="kube-state-metrics", type="hard",

resource=~"(memory|requests.memory)"})) by (cluster)

/

sum(kube_node_status_allocatable{resource="memory",

job="kube-state-metrics"}) by (cluster)

> 1.5

for: 5m

labels:

severity: warning

- alert: KubeQuotaAlmostFull

annotations:

description: >-

Namespace {{ $labels.namespace }} is using {{ $value |

humanizePercentage }} of its {{ $labels.resource }} quota on

cluster {{ $labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubequotaalmostfull

summary: Namespace quota is going to be full.

expr: |-

kube_resourcequota{job="kube-state-metrics", type="used"}

/ ignoring(instance, job, type)

(kube_resourcequota{job="kube-state-metrics", type="hard"} > 0)

> 0.9 < 1

for: 15m

labels:

severity: info

- alert: KubeQuotaFullyUsed

annotations:

description: >-

Namespace {{ $labels.namespace }} is using {{ $value |

humanizePercentage }} of its {{ $labels.resource }} quota on

cluster {{ $labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubequotafullyused

summary: Namespace quota is fully used.

expr: |-

kube_resourcequota{job="kube-state-metrics", type="used"}

/ ignoring(instance, job, type)

(kube_resourcequota{job="kube-state-metrics", type="hard"} > 0)

== 1

for: 15m

labels:

severity: info

- alert: KubeQuotaExceeded

annotations:

description: >-

Namespace {{ $labels.namespace }} is using {{ $value |

humanizePercentage }} of its {{ $labels.resource }} quota on

cluster {{ $labels.cluster }}.

runbook_url: >-

https://runbooks.prometheus-operator.dev/runbooks/kubernetes/kubequotaexceeded

summary: Namespace quota has exceeded the limits.

expr: |-

kube_resourcequota{job="kube-state-metrics", type="used"}

/ ignoring(instance, job, type)